AI Can’t Fix a Broken Design

Why better design beats faster differentiation

Last Thursday morning, about three hours before I was due to present to the AiEdCoP, I discovered I’d hardcoded my API key directly into the frontend of the UDL tool I was planning to demo. I never thought I’d write that sentence, but I’ve since learnt why it’s a bad thing: anyone who opens the page can read the key. Imagine leaving your house key taped to the front door with a note saying “key is here.” I had to rebuild the whole thing from scratch. I got there, just about, and I’ll write up how I did it in a later post. But it is perhaps fitting that a session about the limits of AI started with me being humbled by a basic rookie mistake.

Anyway. The session.

Ask most educators how AI could support diverse learners, and differentiation comes up almost immediately. Simpler text for struggling readers. Alternative versions of tasks. Visual summaries. It’s a reasonable answer, and it reflects exactly what Bee Shaw and I found when we surveyed over 100 New Zealand educators for our study published in the Journal of Technology and Teacher Education last year (you’ll find the pre-print in another post here). When asked how AI could best support inclusive practice, respondents ranked reducing admin to free up face-to-face time first, creating engaging content second, and differentiation of resources third.

That’s not a bad list. But it’s an almost entirely reactive one. And I’ve been sitting with the question it raises: educators are being sold specific tools to help them differentiate, but what if differentiation, even AI-assisted differentiation, isn’t actually the goal?

The differentiation trap

When I was working in initial teacher education, differentiation was one of those skills that trainee teachers knew they needed but couldn’t quite get hold of. It had an almost mythological quality to it. I used to think of it a bit like the early scenes in Harry Potter where the students are trying to cast spells and mostly just end up making frogs explode. They know what they’re aiming for, they’ve been told it’s important, but the gap between the idea and the actual execution is enormous.

And to be fair, differentiation is genuinely hard. When you’re standing in front of a class with a wide spread of abilities, needs, and preferences, adapting your approach in the moment is good teaching. It’s responsive, it’s human, and it matters. AI is actually useful here. It can generate a simplified version of a text, produce an alternative explanation, or create a visual summary, and do it in minutes rather than the hour you’d otherwise spend wrestling with the printer or photocopier on a Monday morning alongside the rest of the staff.

But here’s the thing: differentiation, by its nature, responds to variability after the fact. A learner struggles, or we anticipate they might, and we adjust. The design itself stays the same. We add an alternative, a support, a workaround. AI makes that work faster and more varied. But it doesn’t change the underlying question we’re asking, which is: how do we fix this for that learner?

Universal Design for Learning asks a different question entirely. It asks: have we designed for variability from the start?

What UDL actually means

I’ve written about UDL before, back in 2014, so this isn’t a new argument. What’s new is having AI good enough to generate an image that makes the point for me. The ramp in the image below isn’t a workaround bolted on after someone complained. It’s architecturally part of the building: the same concrete, the same curve, the same intention. That’s what designing for variability from the start actually looks like.

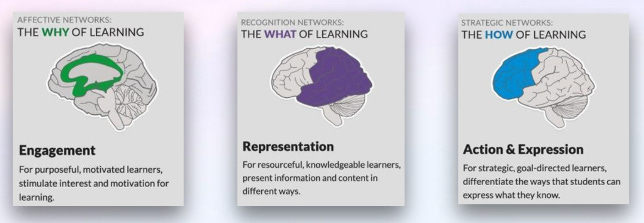

UDL applies the same logic to learning. CAST describes three networks that govern how we learn. The affective network governs the why: what motivates and engages us. The recognition network governs the what: how we perceive and make sense of information. The strategic network governs the how: how we plan, organise, and express what we know. UDL asks educators to anticipate variability across all three before they design, not as add-ons for some learners, but as the default starting point for everyone.

The distinction matters enormously when you think about how AI fits in. AI applied to a differentiation model makes individual fixes faster. AI applied to a UDL model makes the design better for everyone, before anyone has to struggle.

Two tools for a different question

If the argument for UDL is convincing but the practice feels abstract, that’s where I wanted to meet educators with something concrete. Over the past couple of years I’ve built two tools designed to shift the conversation from differentiation toward UDL: one that started as something for beginning teachers trying to get their heads around inclusive design, and one that came out of the Ako Aotearoa research work.

The first is a UDL Custom GPT I built back in 2024 (it still feels strange to write that). I originally made it for RTLBs to share with teachers who needed support adapting lesson plans, particularly those early in their career who understood that UDL mattered but weren’t quite sure what it looked like in practice. It’s not a resource generator. Its job is to interrogate a lesson design against the three UDL networks, asking questions that surface whether a design anticipates variability or just responds to it. The questions it generates are often more useful than any resource it might produce.

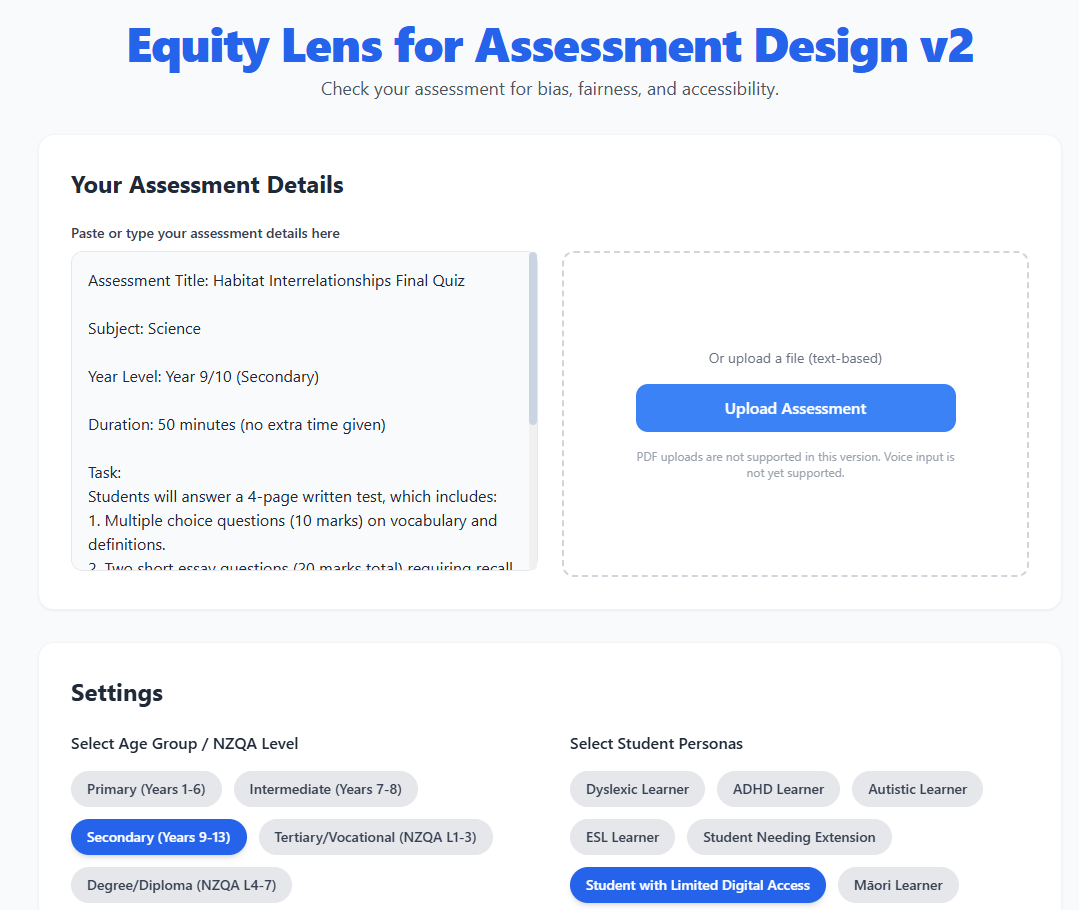

The second is the Inclusive AI Assessment Checker, developed through the Ako Aotearoa project. The idea came out of an AiEdCoP session where Ryan Noonan demonstrated Canva Code, and I started thinking about what it would look like to view a lesson plan through the eyes of different learners. (I actually rebuilt it using Gemini in the end; see the earlier point about the API key!) It lets educators paste in a lesson or assessment task and see it through the perspectives of diverse learner personas (Māori learners, neurodivergent learners, ESOL learners) before they teach.

Those personas are provocations for reflection, not substitutes for real learner voice. When actual community members were involved in the co-design work, they surfaced needs that no AI-generated persona had anticipated: things rooted in lived experience, whakapapa, and community context that a language model simply can’t replicate. The tool is a starting point. Then go and talk with your learners.

The question that matters

One voice from our educator research keeps coming back to me: “Our school is poorly resourced in all areas. Our students are in survival mode. AI is a wealthy school problem.”

That’s worth sitting alongside the marketing, because the pitch for AI-assisted differentiation is everywhere right now: faster resources, personalised pathways, adaptive tools. And some of it is genuinely useful. But educators are being sold specific tools when what most of them actually need is a framework for thinking about design. A tool that generates a simplified text faster doesn’t ask whether the original design was excluding anyone. It just makes the workaround more convenient.

UDL isn’t a tool. It’s the question you ask before you open one. The tools will keep coming and the pitches will get better, but the question underneath all of it stays the same: are you designing for variability, or just fixing it faster? If you want to work through that question with a community of educators, AiEdCoP is a good place to do it, and if you’d like to watch the session where I discussed this with our members, you’ll find that here.

Gander, T., & Shaw, B. (2025). Navigating the AI landscape: Educator insights and pedagogical implications in New Zealand. Journal of Technology and Teacher Education, 32(3), 1–27.

Gander, T. (2025). InclusiveAI: Empowering Aotearoa, an inclusive approach to AI literacy in tertiary education (Final Report, Project 45195). Ako Aotearoa Research and Innovation Agenda.